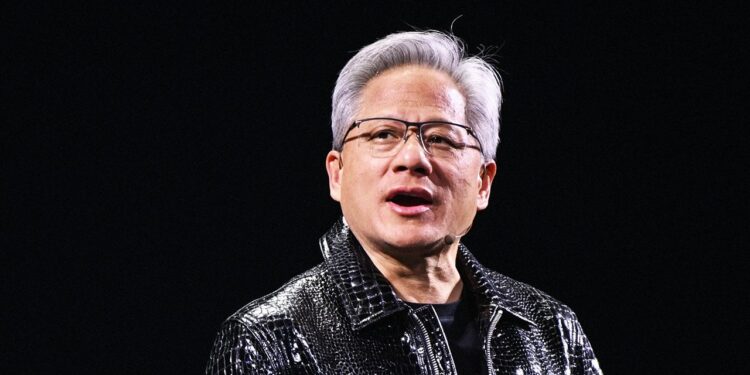

Nvidia CEO Jensen Huang says that the company’s next-generation AI superchip platform, Vera Rubin, is on schedule to begin arriving to customers later this year. “Today, I can tell you that Vera Rubin is in full production,” Huang said during a press event on Monday at the annual CES technology trade show in Las Vegas.

Rubin will cut the cost of running AI models to about one-tenth of that of Nvidia’s current leading chip system, Blackwell, the company told analysts and journalists during a call on Sunday. Nvidia also said Rubin can train certain large models using roughly one-fourth as many chips as Blackwell requires. Taken together, these gains could make advanced AI systems significantly cheaper to operate and make it harder for Nvidia’s customers to justify moving away from its hardware.

Nvidia said on the call that two of its existing partners, Microsoft and CoreWeave, will be among the first companies to begin offering services powered by Rubin chips later this year. Two major AI data centers that Microsoft is currently building in Georgia and Wisconsin will eventually include thousands of Rubin chips, Nvidia added. Some of Nvidia’s partners have started running their next-generation AI models on early Rubin systems, the company said.

The semiconductor giant also said it’s working with Red Hat, which makes open source enterprise software for banks, automakers, airlines, and government agencies, to offer more products that will run on the new Rubin chip system.

Nvidia’s latest chip platform is named after Vera Rubin, an American astronomer who reshaped how scientists understand the properties of galaxies. The system includes six different chips, including the Rubin GPU and a Vera CPU, both of which are built using Taiwan Semiconductor Manufacturing Company’s 3-nanometer fabrication process and the most advanced bandwidth memory technology available. Nvidia’s sixth-generation interconnect and switching technologies link the various chips together.

Each part of this chip system is “completely revolutionary and the best of its kind,” Huang proclaimed during the company’s CES press conference.

Nvidia has been developing the Rubin system for years, and Huang first announced the chips were coming during a keynote speech in 2024. Last year, the company said that systems built on Rubin would begin arriving in the second half of 2026.

It’s unclear exactly what Nvidia means by saying that Vera Rubin is in “full production.” Typically, production for chips this advanced, which Nvidia is building with its longtime partner TSMC, begins at low volume while the chips go through testing and validation and ramps up at a later stage.

“This CES announcement around Rubin is to tell investors, ‘We’re on track,’” says Austin Lyons, an analyst at Creative Strategies and author of the semiconductor industry publication Chipstrat. There were rumors on Wall Street that the Rubin GPU was running late, Lyons says, so Nvidia is now pushing back by saying it has cleared key development and testing steps, and it’s confident Rubin is still on course to begin scaling up production in the second half of 2026.

In 2024, Nvidia had to delay delivery of its then-new Blackwell chips due to a design flaw that caused them to overheat when they were connected together in server racks. Shipments for Blackwell were back on schedule by the middle of 2025.

As the AI industry rapidly expands, software companies and cloud service providers have had to fiercely compete for access to Nvidia’s newest GPUs. Demand will likely be just as high for Rubin. But some companies are also hedging their bets by investing in their own custom chip designs. OpenAI, for example, has said it’s working with Broadcom to build bespoke silicon for its next generation of AI models. These partnerships highlight a longer-term risk for Nvidia: Customers that design their own chips can gain a level of control over their hardware that the company doesn’t offer.

But Lyons says today’s announcements demonstrate how Nvidia is evolving beyond just offering GPUs to becoming a “full AI system architect, spanning compute, networking, memory hierarchy, storage, and software orchestration.” Even as hyperscalers pour money into custom silicon, he adds, Nvidia’s tightly integrated platform “is getting harder to displace.”