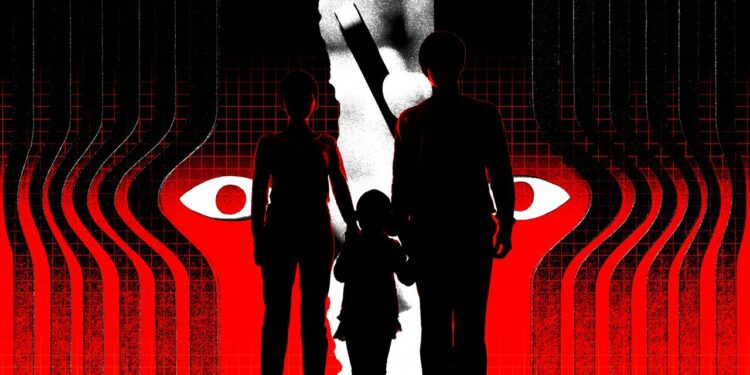

Thousands of men are members of Telegram groups and channels that advertise and promote hacking and surveillance services that can be used to harass friends, wives and girlfriends, and former partners, new research has found. The findings, from a European nonprofit group, also say that the communities are involved in extensive trading, selling, and promotion of a huge variety of abusive content, including nonconsensual intimate images of women, so-called nudifying services, plus folders of images that sellers claim include child sexual abuse material and depictions of incest and rape.

Over six weeks earlier this year, researchers at the algorithmic auditing group AI Forensics analyzed nearly 2.8 million messages sent across 16 Italian and Spanish Telegram communities that regularly post abusive content targeting women and girls. More than 24,000 members of the Telegram groups and channels took part in posting 82,723 images, videos, and audio files over the course of the study, the analysis says. Many posts target celebrities and influencers, but men in the groups also frequently victimize women they know.

“We tend to forget that most victims are ordinary women who sometimes don’t even know that their pictures are shared or manipulated in these kinds of channels,” says Silvia Semenzin, a researcher at AI Forensics who previously uncovered Italian Telegram channels engaging in similar behavior as far back as 2019. “The majority of this violence is directed toward people who the perpetrators know,” she says, suggesting that Telegram, which has over 1 billion monthly active users, according to company founder Pavel Durov, should be subject to stricter regulation and classed as a “very large online platform” under Europe’s online safety rules.

The findings come as Durov is fighting back against Russia’s efforts to block Telegram in that country. The app has long positioned itself as a messaging service that allows free speech but has simultaneously been used by some to share terrorist, sexual abuse, and cybercrime material. Durov is under criminal investigation in France regarding alleged criminal activity taking place on Telegram, although he has consistently denied the allegations.

A Telegram spokesperson tells WIRED that the company removes “millions” of pieces of content per day using “custom AI tools” and has policies in Europe that don’t allow the promotion of violence, illegal sexual content including nonconsensual imagery, and other content such as doxing and selling illegal goods and services.

Among the extensive types of abusive content and services observed by the AI Forensics researchers were frequent references to accessing, publishing, and doxing women’s private information, sharing their Instagram or TikTok content, as well as references to spying or hacking. “Victims are often named, tagged, and locatable through shared profile links,” the group’s report says.

One translated post on Telegram titled “Professional hacking on commission” claimed to be able to give customers “access to phone gallery and extraction of photos and videos,” as well as “anonymous social media hacking.” Another message says: “I hack and recover any type of social media service. I can spy on your partner’s account. Send me a private message.”

Across the dataset there were more than 18,000 references to spying or spy content. One post reads: “Hi, do you have the desire to spy on a girl’s gallery? We sell a bot that does it for info DM.” Meanwhile, users were observed asking if people could find phone numbers linked to Instagram accounts and making other requests, such as “who exchanges spy photos and videos?”