The original version of this story appeared in Quanta Magazine.

Large language models work well because they’re so large. The latest models from OpenAI, Meta, and DeepSeek use hundreds of billions of “parameters,” the adjustable knobs that determine connections among data and get tweaked during the training process. With more parameters, the models are better able to identify patterns and connections, which in turn makes them more powerful and accurate.

But this power comes at a cost. Training a model with hundreds of billions of parameters takes huge computational resources. To train its Gemini 1.0 Ultra model, for example, Google reportedly spent $191 million. Large language models (LLMs) also require considerable computational power each time they answer a request, which makes them notorious energy hogs. A single query to ChatGPT consumes about 10 times as much energy as a single Google search, according to the Electric Power Research Institute.

In response, some researchers are now thinking small. IBM, Google, Microsoft, and OpenAI have all recently released small language models (SLMs) that use a few billion parameters, a fraction of their LLM counterparts.

Small models are not used as general-purpose tools like their larger cousins. But they can excel on specific, more narrowly defined tasks, such as summarizing conversations, answering patient questions as a health care chatbot, and gathering data in smart devices. “For a lot of tasks, an 8 billion–parameter model is actually pretty good,” said Zico Kolter, a computer scientist at Carnegie Mellon University. They can also run on a laptop or cell phone, instead of a huge data center. (There’s no consensus on the exact definition of “small,” but the new models all max out around 10 billion parameters.)

To optimize the training process for these small models, researchers use a few tricks. Large models often scrape raw training data from the internet, and this data can be disorganized, messy, and hard to process. But these large models can then generate a high-quality data set that can be used to train a small model. The approach, called knowledge distillation, gets the larger model to effectively pass on its training, like a teacher giving lessons to a student. “The reason [SLMs] get so good with such small models and such little data is that they use high-quality data instead of the messy stuff,” Kolter said.
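The teacher-student idea can be sketched in a few lines of Python. This is a toy illustration under loud assumptions, not how any of the companies above train their models: `teacher_prob` is a hypothetical stand-in for a large model, the "student" is a two-parameter logistic model, and all function names are invented for the example. The teacher labels a batch of inputs with its own probabilities, and the student is trained only on that clean, teacher-generated data set.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def teacher_prob(x):
    # Hypothetical stand-in for a large model: returns P(label = 1 | x).
    # A real teacher would be a network with billions of parameters.
    return sigmoid(3.0 * x - 1.5)


def build_distilled_dataset(n, seed=0):
    # The teacher turns raw inputs into a clean data set of (input, soft label) pairs.
    rng = random.Random(seed)
    inputs = [rng.uniform(-2.0, 3.0) for _ in range(n)]
    return [(x, teacher_prob(x)) for x in inputs]


def train_student(dataset, epochs=2000, lr=0.5):
    # Fit a tiny logistic model to the teacher's soft labels with plain
    # gradient descent on the cross-entropy loss.
    w, b = 0.0, 0.0
    n = len(dataset)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, target in dataset:
            error = sigmoid(w * x + b) - target
            grad_w += error * x
            grad_b += error
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return w, b


def student_prob(params, x):
    w, b = params
    return sigmoid(w * x + b)


if __name__ == "__main__":
    params = train_student(build_distilled_dataset(200))
    for x in (-1.0, 0.5, 2.0):
        print(x, round(teacher_prob(x), 3), round(student_prob(params, x), 3))
```

In this toy setup the student never sees raw, messy data; it learns only from what the teacher hands it, which is the point Kolter makes about high-quality data.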

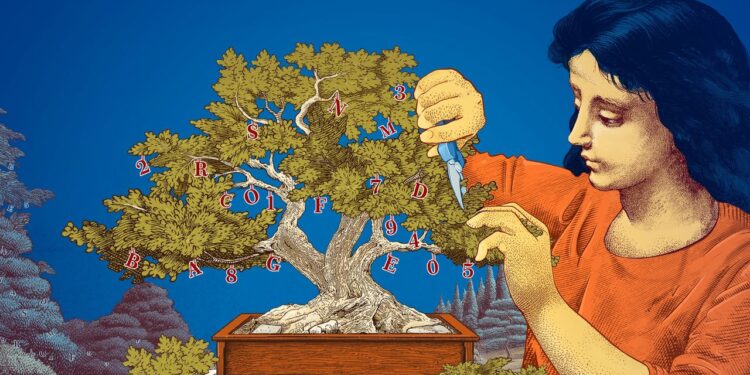

Researchers have also explored ways to create small models by starting with large ones and trimming them down. One method, known as pruning, entails removing unnecessary or inefficient parts of a neural network, the sprawling web of connected data points that underlies a large model.

Pruning was inspired by a real-life neural network, the human brain, which gains efficiency by snipping connections between synapses as a person ages. Today’s pruning approaches trace back to a 1989 paper in which the computer scientist Yann LeCun, now at Meta, argued that up to 90 percent of the parameters in a trained neural network could be removed without sacrificing efficiency. He called the method “optimal brain damage.” Pruning can help researchers fine-tune a small language model for a particular task or environment.
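As a rough illustration of what trimming parameters means, here is a minimal Python sketch of magnitude pruning, which simply zeroes out the weights closest to zero. This is a simpler heuristic than the one in LeCun's paper, which ranks parameters using second-derivative information, and the function name and the example weights are invented for the illustration.

```python
def magnitude_prune(weights, fraction):
    """Return a copy of `weights` with the smallest-magnitude `fraction` set to zero."""
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be between 0 and 1")
    num_to_drop = int(len(weights) * fraction)
    # Rank positions from the smallest absolute weight to the largest.
    ranked = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    dropped = set(ranked[:num_to_drop])
    return [0.0 if i in dropped else w for i, w in enumerate(weights)]


# Made-up weights for one layer of a hypothetical network.
layer = [0.9, -0.05, 0.4, 0.01, -0.7, 0.02, 0.3, -0.03, 0.6, 0.1]
pruned = magnitude_prune(layer, 0.5)
print(pruned)
```

In practice a pruned network is usually retrained briefly so the remaining weights can compensate for the ones that were removed.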

For researchers interested in how language models do the things they do, smaller models offer an inexpensive way to test novel ideas. And because they have fewer parameters than large models, their reasoning might be more transparent. “If you want to make a new model, you need to try things,” said Leshem Choshen, a research scientist at the MIT-IBM Watson AI Lab. “Small models allow researchers to experiment with lower stakes.”

The big, expensive models, with their ever-increasing parameters, will remain useful for applications like generalized chatbots, image generators, and drug discovery. But for many users, a small, targeted model will work just as well, while being easier for researchers to train and build. “These efficient models can save money, time, and compute,” Choshen said.

Original story reprinted with permission from Quanta Magazine, an editorially independent publication of the Simons Foundation whose mission is to enhance public understanding of science by covering research developments and trends in mathematics and the physical and life sciences.